Every successful digital marketer has one thing in their back pocket that beats intuition, trends, and even experts’ opinions down the line. That tool is A/B testing.

This post explains what A/B testing is, why it is a must-have technique in digital marketing, and how to use it in practice, with examples. By the end, you’ll know enough to start applying A/B testing to make data-driven decisions that improve outcomes and increase ROI.

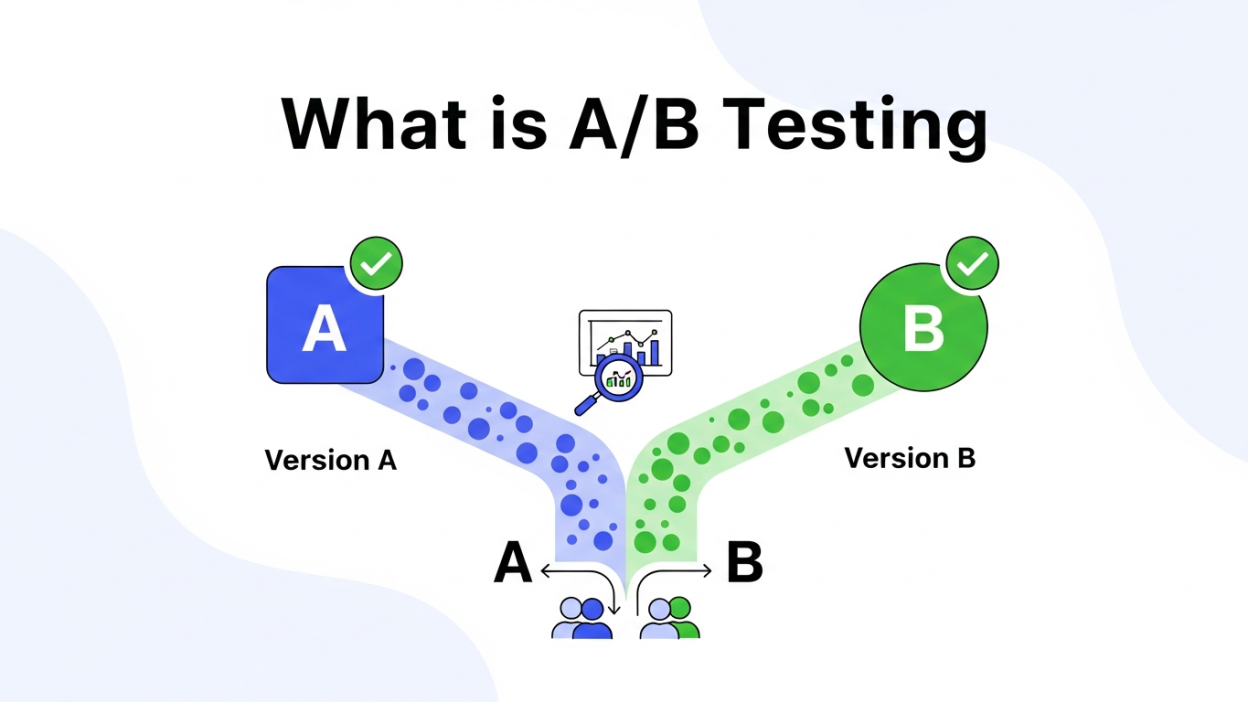

What Is A/B Testing

A/B testing (or split testing) is an experimental method in marketing in which you compare two versions of a marketing element and figure out which one performs better. These components can be website designs, ad creatives, email headlines, CTA buttons, and so on.

Take, for example, whether a red CTA button or a blue one drums up more clicks. You’d create two different variations of the same landing page, with one that has the red button and the other that has a blue one. Each version is presented to a different group within your audience, and the performance (click-through rate, conversion, and so on) is tracked to determine which button will help you reach your goal.

This easy, straightforward process eliminates the need for guesswork when creating marketing strategies online.

Why A/B Testing is Essential for Digital Marketing

Data-Driven Decision Making

Gone are the days when marketers relied solely on “gut feeling.” A/B testing gives you real results so you can make decisions with confidence.

Optimize Performance Metrics

A/B testing can improve key performance indicators (KPIs) such as conversion rates, click-through rates, bounce rates, and engagement metrics by optimizing even the smallest details.

Know Your Audience Preferences

You can never fully predict what an audience is going to do. Audience likes and dislikes surprise even the most seasoned marketers. A/B testing reveals those preferences and lets you respond to them.

Enhance ROI

Improvements validated by A/B testing translate directly into higher ROI. More effective campaigns mean both more efficient spending and better results.

Minimize Risk

Trying out a new idea or campaign can be a gamble. A/B testing lets you pilot changes on part of your audience and reduce risk before rolling them out to everyone.

How to Conduct A/B Testing

Define Your Goal

Before you set up an A/B test, be clear about why you’re running it. Is it to boost email opens, reduce bounce rates, or get more out of your ad spend? A clearly defined goal will guide every choice that follows.

Sample Goal: Boost CTR for your homepage CTA.

Identify the Element to Test

Only test one variable at a time. Whether it’s a headline, subject line, button color, or product image, focusing on just one element means that results can be unambiguous and highly actionable.

For example, if you change both the CTA design and the homepage headline at once, you won’t know which one caused the change in performance.

Create Two Variations

Make two versions of the element you’re testing. Keep everything else identical except the one variable under test (here, the button copy).

- Example Variation A (Control): red “Sign Up Today” CTA

- Example Variation B (Test): red “Join Us Now” CTA

Divide Your Audience Equally

Split your audience in half at random. Random assignment, with each variation shown to roughly the same number of visitors or users, helps avoid demographic or behavioral bias.
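
One common way to get a random but stable split is to hash each visitor’s ID. The sketch below is only an illustration: the function name `assign_variant`, the experiment label `homepage-cta`, and the `user-…` IDs are hypothetical, and most testing tools handle this step for you.

```python
import hashlib

def assign_variant(user_id: str, experiment: str = "homepage-cta") -> str:
    """Deterministically assign a user to variation "A" or "B"."""
    # Hash the experiment name together with the user ID so the same user
    # always lands in the same group for a given experiment.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"

# Simulate 10,000 hypothetical visitors and count how they were split.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}")] += 1
print(counts)
```

Because the assignment depends only on the ID, a returning visitor keeps seeing the same variation, which keeps the experience consistent and the data clean.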

Run the Test Simultaneously

Timing matters. Both versions should run at the same time to control for outside factors (such as the time of day or season of the year) so that your results don’t become skewed.

Determine the Results and Analyze Them

When your tests have generated a sufficient amount of data, assess the performance of each variation using your goal metric (e.g., clicks, conversions, open rates).
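
As a rough illustration of that comparison, the sketch below computes each variation’s conversion rate and the relative lift of B over A. The visitor and conversion counts are made-up numbers, and the helper names are assumptions for this example only.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who completed the goal action."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return conversions / visitors

def relative_lift(rate_a: float, rate_b: float) -> float:
    """How much better (or worse) B performed relative to A."""
    return (rate_b - rate_a) / rate_a

# Hypothetical results: 5,000 visitors per variation.
rate_a = conversion_rate(200, 5000)  # control
rate_b = conversion_rate(250, 5000)  # test
lift = relative_lift(rate_a, rate_b)

print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  relative lift: {lift:.1%}")
```

A higher rate on its own does not prove B is better; the difference still needs a significance check before you act on it.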

Leverage A/B testing tools such as Optimizely or VWO to collect end-to-end analytics and reporting. (Google Optimize, once a popular free option, was discontinued by Google in September 2023.)

Act on Your Results

Apply the winning variation to your campaigns, then repeat the process with new tests to keep optimizing.

From Guesswork to Growth: Why Testing Culture Matters

A/B testing is not just a tactic—it’s a mindset that shifts marketing from assumptions to evidence. Brands that consistently test outperform competitors because they rely on patterns, not opinions. When teams adopt a testing culture, every campaign becomes an opportunity to learn. Small insights compound over time, leading to steady growth rather than sporadic wins. This approach aligns closely with advanced marketing models like Predictive Account-Based Marketing, where historical behavior helps anticipate future actions. Instead of reacting late, marketers can prepare smarter variations in advance. A testing-first culture encourages curiosity, accountability, and continuous improvement, ensuring that decisions are backed by performance data rather than internal debates or short-lived trends.

Understanding User Psychology Through Experimentation

Behind every click, scroll, or conversion is a psychological trigger. A/B testing helps uncover what truly motivates users—urgency, trust, clarity, or emotion. By testing variations in wording, layout, or visual hierarchy, marketers can better understand how users process information and make decisions. Over time, these insights build a deep behavioral map of the audience. This is especially valuable when aligning campaigns with a Data-Driven ABM Strategy, where personalized experiences depend on accurate behavioral signals. Instead of guessing why users behave a certain way, experimentation provides direct answers. Understanding psychology through testing leads to more persuasive messaging and stronger emotional connections with your audience.

Scaling Campaigns Without Losing Accuracy

One common challenge marketers face is scaling campaigns while maintaining performance quality. What works for a small audience doesn’t always translate seamlessly to larger segments. A/B testing reduces this risk by validating changes incrementally before full-scale rollouts. This controlled approach ensures growth doesn’t come at the cost of efficiency. As campaign complexity increases, automation becomes essential. Tools that support Data Entry Automation help streamline data collection and reporting, allowing marketers to focus on insights rather than manual tracking. When testing is embedded into scaling efforts, brands can expand confidently, knowing that every decision has already been validated with real user data.

Improving Customer Experience Across Touchpoints

Customer experience is shaped by multiple interactions, not a single page or email. A/B testing allows marketers to optimize each touchpoint—ads, landing pages, emails, and follow-ups—so the journey feels seamless. Even minor improvements, such as clearer navigation or better microcopy, can significantly reduce friction. By testing consistently, brands ensure that customer experience evolves alongside user expectations. This approach prevents outdated designs or messaging from hurting conversions. Over time, testing helps create a cohesive experience where every interaction feels intentional, relevant, and easy, increasing satisfaction and long-term loyalty without requiring drastic redesigns.

Reducing Wasted Spend Through Smarter Optimization

Marketing budgets are finite, and inefficient campaigns drain resources quickly. A/B testing helps identify which elements contribute to results and which ones waste spend. Instead of increasing budgets blindly, marketers can optimize existing assets for better performance. Testing ad creatives, landing page headlines, or offer structures ensures that money is allocated to what works. This disciplined optimization minimizes losses and improves cost efficiency. When every improvement is validated, brands gain more value from the same investment. Over time, this creates a leaner marketing engine where spending decisions are driven by evidence, not trial-and-error guesswork.

Aligning Teams with Objective Performance Metrics

One underrated benefit of A/B testing is internal alignment. Marketing, design, and leadership teams often have differing opinions on what works best. Testing replaces subjective debates with objective data. When results are clear, decision-making becomes faster and more collaborative. Teams rally around performance metrics rather than personal preferences. This transparency builds trust and accountability across departments. Everyone understands why a change was made and how it impacts results. Over time, this shared reliance on data fosters a healthier workflow, where experimentation is encouraged and learning from failure is seen as progress rather than a setback.

Turning Insights into Long-Term Strategy

The real value of A/B testing lies beyond individual wins. Each test contributes to a growing knowledge base about your audience, messaging, and design principles. When documented properly, these insights inform future strategies and reduce repetitive mistakes. Patterns emerge—what tone converts best, which layouts retain users, or which offers trigger action. These learnings shape long-term planning and brand consistency. Instead of starting from scratch with every campaign, marketers build on proven frameworks. This cumulative intelligence transforms A/B testing from a tactical exercise into a strategic advantage that continuously strengthens marketing performance.

A/B Testing Best Practices

Test One Variable at a Time

Avoid the temptation to change multiple variables in the same experiment. By isolating a single change, you can clearly see the impact it made.

Allocate Enough Time

Give your test enough time to collect enough data for meaningful results. Cutting a test short and drawing the wrong conclusion is a real risk.

Ensure Statistical Significance

Leverage statistical significance calculators to make sure that your findings are not just a matter of luck.
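
If you are curious what such a calculator does under the hood, here is a minimal sketch of one common approach, a two-sided two-proportion z-test. The function name and the input numbers are hypothetical, and real tools may use different or more sophisticated methods.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best single estimate if A and B truly perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation in the data, so no evidence of a difference
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

# Hypothetical results: 200 of 5,000 converted on A, 250 of 5,000 on B.
p_value = two_proportion_p_value(200, 5000, 250, 5000)
print(f"p-value: {p_value:.4f}")
print("significant at 5%" if p_value < 0.05 else "not significant at 5%")
```

A p-value below your chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be pure chance.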

Segment Your Audience

If you can, divide your audience further into segments such as age, location, or behavior. Lessons from these segments can be especially valuable.
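
A segmented breakdown can be sketched in a few lines. The records below are invented for illustration, and the tuple layout `(segment, variation, converted)` is an assumption rather than the export format of any particular tool.

```python
from collections import defaultdict

# Hypothetical event records: (segment, variation, converted)
events = [
    ("mobile", "A", True), ("mobile", "A", False),
    ("mobile", "B", True), ("mobile", "B", True),
    ("desktop", "A", True), ("desktop", "A", False),
    ("desktop", "B", False), ("desktop", "B", False),
]

def rates_by_segment(records):
    """Conversion rate for every (segment, variation) pair."""
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variation, converted in records:
        totals[(segment, variation)][0] += int(converted)
        totals[(segment, variation)][1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}

rates = rates_by_segment(events)
for (segment, variation), rate in sorted(rates.items()):
    print(f"{segment:8} {variation}: {rate:.0%}")
```

Keep in mind that each segment contains fewer visitors than the full audience, so segment-level differences need their own significance check.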

Avoid Confirmation Bias

Approach every test with an open mind. Trust the data, even when it contradicts your preconceptions.

Examples of A/B Testing in the Real World

Every now and then, the tiniest of shifts can prove seismic. Here are a few ways brands leveraged A/B testing to improve their efforts.

- Spotify employed A/B testing to refine their onboarding email subject lines, raising open rates by more than 30%.

- HubSpot tested button copy on a landing page and discovered that rewriting the CTA in the first person with a single action (“Get My Free Report”) resulted in a 14% higher conversion rate.

- Amazon uses A/B testing a lot for home page layout and product recommendations to stay ahead in e-commerce.

The Future of A/B Testing

As AI and machine learning continue to develop, A/B testing is expanding toward multivariate testing. AI-based tools can analyze many variables simultaneously and predict outcomes more quickly than traditional test methods. Staying informed about these developments will help marketers run even more effective campaigns.

Have questions about A/B testing or want to know how to get started in your own campaigns? Let us know in the comments. We’d be happy to help you find a good starting point!

Frequently Asked Questions (FAQ)

What is the ideal sample size for A/B testing?

There is no fixed number, but your sample size must be large enough to reach statistical significance. Small sample sizes can lead to misleading results. Factors like current traffic, conversion rate, and expected improvement all affect the required size. Many A/B testing tools include built-in calculators to estimate how much traffic and time you need. As a rule of thumb, higher traffic allows faster and more reliable testing.
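
For a feel of how those factors interact, here is a sketch of a standard textbook approximation for comparing two conversion rates. The defaults (5% significance level, 80% power) are common conventions rather than requirements, the function name is hypothetical, and the calculator built into your tool may use a different formula.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variation(baseline_rate: float, min_relative_lift: float,
                              alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH variation to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + min_relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical case: 4% baseline conversion rate, hoping to detect a 25% relative lift.
needed = sample_size_per_variation(0.04, 0.25)
print(f"Visitors needed per variation: {needed}")
```

With these example inputs the estimate comes out at roughly 6,700 visitors per variation. Trying to detect a smaller lift pushes the number up sharply, which is why low-traffic sites struggle to test subtle changes.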

How long should an A/B test run?

An A/B test should run long enough to account for natural user behavior cycles, such as weekdays vs weekends. Typically, tests should run for at least one to two weeks, or until statistical significance is achieved. Stopping a test too early can result in false winners. Consistency matters more than speed when drawing accurate conclusions.
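
A quick way to plan duration is to divide the traffic you need by the traffic you get, then round up to whole weeks. The sketch below assumes you already have a required sample size per variation; the figures used (6,700 visitors per variation, 1,200 visitors a day) and the function name are hypothetical.

```python
from math import ceil

def estimate_duration_days(sample_per_variation: int, daily_visitors: int,
                           variations: int = 2) -> int:
    """Days needed to reach the sample size, rounded up to whole weeks."""
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    days = ceil(sample_per_variation * variations / daily_visitors)
    # Round up to full weeks (minimum one week) so weekdays and weekends
    # are represented equally in the data.
    return max(7, ceil(days / 7) * 7)

days_needed = estimate_duration_days(6700, 1200)
print(f"Plan to run the test for about {days_needed} days")
```

Deciding the duration before the test starts also removes the temptation to stop the moment one variation pulls ahead.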

Can I test more than two variations at once?

Traditional A/B testing compares two versions. You can compare more than two versions of the same element (often called A/B/n testing) or test combinations of several elements at once with multivariate testing. However, testing more variations requires significantly more traffic to maintain accuracy. For beginners or low-traffic websites, sticking to simple A/B tests is more practical and reliable before moving to complex experiments.
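
To see why traffic requirements grow so quickly, count the combinations. In this sketch the headlines, colors, image names, and the figure of 5,000 visitors per combination are all hypothetical placeholders.

```python
from itertools import product

# Hypothetical elements to test together.
headlines = ["Sign Up Today", "Join Us Now"]
button_colors = ["red", "green"]
hero_images = ["team.png", "product.png", "customer.png"]

# Every combination of the three elements becomes its own variation.
combinations = list(product(headlines, button_colors, hero_images))

visitors_per_combination = 5000  # assumed requirement, for illustration only
total_visitors = len(combinations) * visitors_per_combination

print(f"{len(combinations)} combinations need about {total_visitors:,} visitors")
```

Two headlines, two colors, and three images already produce twelve variations, each of which needs enough visitors of its own.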

What elements should I prioritize for A/B testing?

High-impact elements should always come first. These include headlines, call-to-action buttons, landing page layouts, pricing displays, email subject lines, and product images. Testing minor design changes before optimizing core conversion elements often leads to slower results. Focus on areas that directly influence user decisions and revenue.

Is A/B testing only useful for large businesses?

Not at all. A/B testing is valuable for businesses of all sizes. Small businesses benefit just as much—sometimes more—because even small improvements can significantly impact revenue. With affordable tools and simple setups, startups and small brands can make data-driven decisions without large budgets.

What is the biggest mistake marketers make with A/B testing?

The most common mistake is testing too many variables at once or ignoring statistical significance. Another major issue is confirmation bias—choosing results that “feel right” instead of trusting the data. Successful A/B testing requires patience, discipline, and a willingness to let data guide decisions, even when results are unexpected.